Toycoin Part 6b: Nodes

Note: the toycoin series of posts is for learning / illustrative purposes only; no part of it should be considered secure or useful for real-world purposes.

TLDR: network programming is… hard.

In particular, I find it challenging to reason about asynchronous (concurrent) code, as well as threads, because it’s difficult to come up with clean, functional-style, linear state transitions.

Following on from the prior post, it took some more reading and a helpful StackOverflow answer to develop something barely passable as a blockchain node. It is really only barely passable: its many problems are noted in the body and conclusion of this post.

Node Main Loop

A toycoin node consists of two logical parts:

- A block_worker coroutine, which listens to a queue of transactions. When enough transactions have accumulated, it attempts to generate a block (in a separate thread, as the proof-of-work is a CPU-intensive, blocking function).
- A handle_data handler, called from the main coroutine, which processes network messages, such as transactions and blocks, and updates the node state as appropriate.

    import asyncio
    import uuid
    from asyncio import Queue


    async def main(args):
        """Main."""
        me = uuid.uuid4().hex[:8]
        print(f'Starting up {me}: Full Node')
        reader, writer = await asyncio.open_connection(args.host, args.port)
        print(f'I am {writer.get_extra_info("sockname")}')
        channel = args.channel
        print(f'Node on channel {channel}')
        await send_msg(writer, channel.encode())

        txn_queue = Queue()
        # Keep a reference so the worker task is not garbage-collected.
        worker = asyncio.create_task(
            block_worker(txn_queue, writer, channel, args.delay))

        try:
            while data := await read_msg(reader):
                handle_data(data, txn_queue)
        except asyncio.IncompleteReadError:
            print('Server closed.')
        finally:
            worker.cancel()
            writer.close()
            await writer.wait_closed()

Conceptually, this works reasonably well: the data handler updates the transaction queue and node state, while the independent block worker asynchronously monitors the queue and calls blocking work in a separate thread at its discretion.
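To make that division of labour concrete, here is a minimal, self-contained sketch of the same pattern. It is not toycoin's code: slow_work, worker and demo are made-up names, the blocking function just sleeps and sums, and a None sentinel is used so the demo terminates (the real block worker loops forever). It assumes Python 3.9+ for asyncio.to_thread.

```python
import asyncio
import time
from typing import List


def slow_work(items: List[int]) -> int:
    """Stand-in for proof-of-work: blocks the calling thread briefly."""
    time.sleep(0.05)
    return sum(items)


async def worker(queue: asyncio.Queue, results: List[int]) -> None:
    """Collect items in pairs and hand each pair to a thread."""
    batch: List[int] = []
    # None is a sentinel used only so this demo can stop the worker.
    while (item := await queue.get()) is not None:
        batch.append(item)
        if len(batch) >= 2:
            # The event loop stays responsive while the thread is busy.
            results.append(await asyncio.to_thread(slow_work, batch))
            batch = []


async def demo() -> List[int]:
    queue: asyncio.Queue = asyncio.Queue()
    results: List[int] = []
    task = asyncio.create_task(worker(queue, results))  # keep a reference
    for n in (1, 2, 3, 4):
        queue.put_nowait(n)   # plays the role of the data handler
    queue.put_nowait(None)    # tell the worker to finish
    await task
    return results
```

Calling asyncio.run(demo()) returns [3, 7]: the worker processes two batches of two items each, in order, while the main coroutine is free to do other things between awaits.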

Data Handler

The data handler is straightforward. The primitive semantic protocol is to use four characters to identify the payload type, followed by the payload.

    def handle_data(data: bytes, txn_queue: Queue):
        """Data handler."""
        print(f'Received message type: {data[:4].decode()}')
        if data[:4] == b'TXN ':
            txn_pair = serialize.unpack_txn_pair(data[4:])
            handle_txn(txn_pair, txn_queue)
        elif data[:4] == b'BLOC':
            blocks = serialize.unpack_blockchain(data[4:])
            handle_blocks(blocks)
        else:
            print(f'Could not handle message type {data[:4].decode()}')
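The sending side of this tagging scheme is not shown here, so the following is only a sketch of how the four-byte prefix could be built and split. pack_msg and unpack_msg are hypothetical helpers, not functions from toycoin; the only things taken from the handler above are the tag width and the b'TXN ' / b'BLOC' values.

```python
from typing import Tuple

TAG_LEN = 4  # every message starts with a four-byte type tag


def pack_msg(tag: bytes, payload: bytes) -> bytes:
    """Prefix the payload with its tag, padding short tags with spaces."""
    if not 0 < len(tag) <= TAG_LEN:
        raise ValueError(f'tag must be 1 to {TAG_LEN} bytes, got {tag!r}')
    return tag.ljust(TAG_LEN) + payload


def unpack_msg(data: bytes) -> Tuple[bytes, bytes]:
    """Split a raw message into (tag, payload)."""
    if len(data) < TAG_LEN:
        raise ValueError('message shorter than its type tag')
    return data[:TAG_LEN], data[TAG_LEN:]
```

For example, pack_msg(b'TXN', b'[]') gives b'TXN []', which is why the transaction tag carries a trailing space. A fixed-width tag keeps the dispatch trivial, at the cost of a very small namespace of message types.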

The handle_txn function just checks pairs of (tokens, txn) for local validity before putting them onto the queue. The queue is an unbounded asyncio.Queue, so there is not too much to worry about.

Blocks, on the other hand, are stored in a global variable, and require some more thought.

    def handle_blocks(blocks: block.Blockchain):
        """Handle blocks.

        Update node blockchain if blocks are valid and form a longer chain.

        """
        global BLOCKCHAIN
        if len(blocks) > len(BLOCKCHAIN) and block.valid_blockchain(blocks):
            print('Received longer, valid blockchain.')
            BLOCKCHAIN = blocks
        else:
            print('Received blockchain but it is not longer, or invalid.')

When a new block is received, either:

- the block worker has worked on the next block (of presumably same or similar transactions), and has completed the work; or

- the block worker has not yet completed the block

The data handler cannot directly observe the block worker, but we know that the block worker will only update the node’s global blockchain from a coroutine (and not an independent, proof-of-work thread). So we just use the blockchain’s consensus heuristic, adopting the longest chain at that moment.

Note that the protocol (very inefficiently) sends the entire blockchain whenever there’s an update. Also, valid_blockchain does not verify the absence of double spending in blocks generated by other nodes – a major flaw! So there’s lots of room for improvement in both security / correctness and performance.
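As a rough idea of what the missing double-spend check could look like, here is a sketch over a deliberately simplified data model. The shapes are assumptions for illustration only (a transaction is just the list of token ids it spends, a block is a list of transactions), and no_double_spend is a hypothetical name; toycoin's real types are richer, and this says nothing about whether the tokens exist or are correctly signed.

```python
from typing import Iterable, List

# Simplified stand-ins: a transaction is the list of token ids it spends,
# a block is a list of transactions, and a chain is a sequence of blocks.
Txn = List[str]
Block = List[Txn]


def no_double_spend(chain: Iterable[Block]) -> bool:
    """Return True if no token id is spent more than once in the chain."""
    spent = set()
    for blk in chain:
        for txn in blk:
            for token in txn:
                if token in spent:
                    return False
                spent.add(token)
    return True
```

With this model, no_double_spend([[['a'], ['b']], [['c']]]) is True, while a chain that spends 'a' in two different blocks is rejected. A check like this would have to run on every received chain before adopting it, which is one more reason not to resend the whole chain on each update.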

Block Worker

The block worker runs another forever loop, and “wakes up” when a new transaction is received from the queue.

If the tokens used in a transaction are valid, with no double-spending, the transaction is added to the local list of transactions to process. When there are at least two transactions, the node attempts to generate a block by calling gen_block, which runs the proof-of-work in a separate thread.

    async def block_worker(txn_queue: Queue,
                           writer: asyncio.StreamWriter,
                           channel: str,
                           delay: int):
        """Queue manager for generating blocks."""
        txn_pairs: List[transaction.TxnPair] = []
        while True:
            txn_pair = await txn_queue.get()
            if valid_tokens(txn_pair, txn_pairs):
                txn_pairs.append(txn_pair)
            if len(txn_pairs) >= 2:
                txns = [txn for _, txn in txn_pairs]
                b, txns_ = await asyncio.to_thread(gen_block, txns)
                await asyncio.sleep(delay)  # slow some nodes down artificially
                if b and block.valid_blockchain(BLOCKCHAIN + [b]):
                    await update_blockchain(b, writer, channel)
                    txn_pairs = update_txn_pairs(txn_pairs, txns_)
                else:
                    print('Invalid block or blockchain')
                    print(f'Dropping txns:\n{show.show_txn_hashes(txns)}\n')
                    txn_pairs = []

If a block is generated successfully, it will be broadcast to the network and will update the node’s global blockchain. Note that if updated blocks have been received while the node was generating its latest block, block.valid_blockchain(BLOCKCHAIN + [b]) will fail due to invalid hashes, and the block will be discarded.
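Why a freshly mined block stops validating once the chain has moved on can be shown with a toy hash-linked chain. This is a sketch under assumptions: it supposes that validation includes checking each block's previous_hash against the hash of the block before it (block headers do carry previous_hash and this_hash fields), and it uses SHA-256 over a made-up Block type rather than toycoin's real header and proof-of-work.

```python
import hashlib
from typing import List, NamedTuple


class Block(NamedTuple):
    previous_hash: bytes
    payload: bytes

    @property
    def this_hash(self) -> bytes:
        """Hash covering the link to the parent and the block contents."""
        return hashlib.sha256(self.previous_hash + self.payload).digest()


def links_ok(chain: List[Block]) -> bool:
    """Check that each block points at the hash of the block before it."""
    return all(cur.previous_hash == prev.this_hash
               for prev, cur in zip(chain, chain[1:]))


genesis = Block(b'\x00' * 32, b'genesis')
mine = Block(genesis.this_hash, b'my txns')       # mined on top of genesis
theirs = Block(genesis.this_hash, b'their txns')  # arrives while mining
chain = [genesis, theirs]                         # the chain has moved on
```

Here links_ok([genesis, mine]) holds, but links_ok(chain + [mine]) does not, because mine still points at genesis rather than at theirs. The work that went into mine is wasted unless it is redone on top of the new tip.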

The above works reasonably well for a single node, running in isolation. What happens, though, when the blockchain is updated at inconvenient times for the worker? For example:

- the transactions in the local txn_pairs list, awaiting processing, may already be incorporated into blocks by the time gen_block is called, resulting in wasted CPU effort
- the node drops transactions after gen_block fails to generate a block, which may be ok if the latest block generated by another node has included them, but there’s no such guarantee

So, once again for both correctness and efficiency, there remains much room for improvement in more granular syncing of the worker’s state with the node’s blockchain.
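One possible direction, sketched here with hypothetical names and simplified shapes, is to filter the pending list against the chain whenever a longer chain is adopted, instead of either mining stale transactions or dropping everything. In this sketch a pending entry is (tokens, txn_hash), loosely mirroring the (tokens, txn) pairs in the worker, and the chain is summarised by the set of transaction hashes it already contains. In the actual node the pending list lives inside block_worker, so some restructuring would be needed to share it.

```python
from typing import List, Set, Tuple

# Hypothetical shape: (tokens, txn_hash) for each transaction awaiting a block.
Pending = Tuple[List[str], str]


def prune_pending(pending: List[Pending],
                  confirmed: Set[str]) -> List[Pending]:
    """Keep only the pending entries whose txn is not yet in a block."""
    return [pair for pair in pending if pair[1] not in confirmed]


pending = [(['t1'], 'C7UR'), (['t2'], 'cz/p'), (['t3'], 'KW4d')]
confirmed = {'C7UR', 'cz/p'}  # hashes found in a newly adopted chain
still_pending = prune_pending(pending, confirmed)
```

After the call, still_pending contains only the 'KW4d' entry, so the worker would neither redo work on confirmed transactions nor lose the one that has not made it into a block yet.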

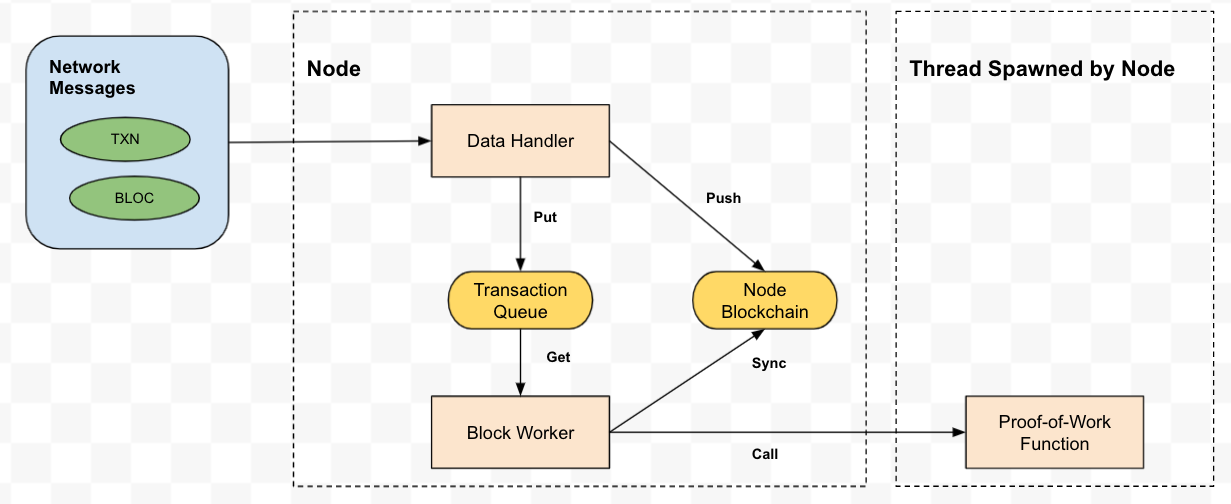

Logical Diagram

The logical diagram of the node, as decribed so far, looks something like this:

Testing

There are probably some frameworks for network-oriented testing. Another helpful tool would be a nice front-end for visualizing activity (Elm via websockets?), which might be a fun follow-up for this project.

For now, there’s old-fashioned printing in the terminal.

The relay node shows various clients connecting, and messages being sent…

    % python relay.py
    Remote ('127.0.0.1', 52655) subscribed to b'/topic/main'
    Remote ('127.0.0.1', 52656) subscribed to b'/topic/main'
    Remote ('127.0.0.1', 52657) subscribed to b'/topic/main'
    Remote ('127.0.0.1', 52658) subscribed to b'/connect'
    Sending to b'/topic/main': b'TXN [[], "{\\"previo'...
    Sending to b'/topic/main': b'TXN [[], "{\\"previo'...
    [...]
    Sending to b'/topic/main': b'BLOC["{\\"header\\": '...
    Sending to b'/topic/main': b'BLOC["{\\"header\\": '...
    Sending to b'/topic/main': b'TXN [["{\\"txn_hash\\'...

As with the previous post, the transaction oracle submits (valid) transactions to the network at random intervals…

    % python txn_oracle.py
    Starting up b79fe4af: Transaction Oracle
    I am ('127.0.0.1', 52725)
    Generating new transaction...
    Wallet balances: 100, 50, 25, 10
    Sending from wallet 2 to 0: 8
    Wallet balances: 108, 50, 17, 10
    Generating new transaction...

Now, we start two nodes, one with no delay, and another with an artificial delay of 5 seconds in its block worker loop (i.e. simulating a node with less CPU).

First, output from the regular node:

    % python node.py
    Starting up 4886adee: Full Node
    I am ('127.0.0.1', 52722)
    Node on channel /topic/main
    Received message type: TXN
    Received message type: TXN
    Starting block gen...
    Received message type: TXN
    Received message type: TXN
    Finished block gen, hash b'\x00\xa5\xce\xa2\xd5\xff\xc8\x14(\xf2:\xe2 \xbfu6\x89\xb7=2\x8c\x0f\x96$\x0c\xc7\x13\xa3MaY\xee;\xe0\xb9\x01K\xe4a\xf1L\x0e\xe2\xa1\x1aH\xef\rL7\xed\xf2~"V\x991\xefh\xb0\xf1\xb9IV'
    Block has 2 txns
    Sent updated blockchain
    Starting block gen...
    Finished block gen, hash b'\x00\xcc\x92KM\xb9\xad\x9fVx\xfa[\xa4R\xb4\xb4\xa3\xe3\x89y\xf6\x85\xbe\xaeS\xea\x17t\n\xd3\xed\x1e\xe14u\xb8\xad`\xace\xf5\xd3\x95\xc3APW\x07\x97\x9e\xefgO\xc9 \xd4V\x15]"N\xa6\xfcS'
    Block has 2 txns
    Received message type: BLOC
    Received blockchain but it is not longer, or invalid.
    Sent updated blockchain
    [...]

It’s a bit hard to follow with the interleaved messages from the coroutines, but we observe two blocks being created by this node and broadcast.

On the other hand, the delayed node arrives at the same hash for the first block, but it’s too late, so it drops the transactions used for that block.

    % python node.py --delay=5
    Starting up 39355b53: Full Node
    I am ('127.0.0.1', 52723)
    Node on channel /topic/main
    Received message type: TXN
    Received message type: TXN
    Starting block gen...
    Received message type: TXN
    Received message type: TXN
    Finished block gen, hash b'\x00\xa5\xce\xa2\xd5\xff\xc8\x14(\xf2:\xe2 \xbfu6\x89\xb7=2\x8c\x0f\x96$\x0c\xc7\x13\xa3MaY\xee;\xe0\xb9\x01K\xe4a\xf1L\x0e\xe2\xa1\x1aH\xef\rL7\xed\xf2~"V\x991\xefh\xb0\xf1\xb9IV'
    Block has 2 txns
    Received message type: BLOC
    Received longer, valid blockchain.
    Received message type: BLOC
    Received longer, valid blockchain.
    Invalid block or blockchain
    Dropping txns:
    -> C7URVxu/yZ...
    -> cz/piogZqv...

The listener node has more verbose output. Here is the blockchain it receives after two blocks have been generated. We can see, for example, that the two transaction hashes dropped by the slow node are the same as those in the first block:

    Received BLOC:
    --------------------------------------------------------------------------------
    Blockchain
    Blocks: 2 | Total Txns: 4 | Valid: True

    Block 0 Header:
    {
      "merkle_root": "Aed9l4j3eX...",
      "nonce": "NjMx...",
      "previous_hash": "n60gOVqBWm...",
      "this_hash": "AKXOotX/yB...",
      "timestamp": "MTYyODcwOT..."
    }
    Txns Hashes:
    -> C7URVxu/yZ...
    -> cz/piogZqv...

    Block 1 Header:
    {
      "merkle_root": "AYjXQ0N73n...",
      "nonce": "MTg0...",
      "previous_hash": "AKXOotX/yB...",
      "this_hash": "AMySS025rZ...",
      "timestamp": "MTYyODcwOT..."
    }
    Txns Hashes:
    -> KW4drLNIOA...
    -> 5+xMYKNvhs...

    Transmission ended.

Wrapping Up

There really are many notable feature issues / gaps… to name just a few:

- Coinbase transactions are backdoored for validation (how do proper protocols handle coinbase transactions, and genesis blocks?)

- The blockchain validation and syncing protocol is extremely inefficient, and the validity of tokens in blocks computed by other nodes is not verified beyond correct hashes, etc

- The network protocol is too primitive, e.g. nodes receive their own broadcasts without knowing they are themselves the sender

- Not real P2P (relying on a relay node for broadcasting)

- The transaction oracle doesn’t wait for transactions to be included in blocks (a requirement for honest nodes to attest to their validity)

- There is no SPV (simplified payment verification) implementation

As always, reality has a surprising amount of detail!

But the complexity of such detail is often not surfaced without first-hand experience. So in many cases (e.g. where the risks / costs are low), it really makes sense to just dive in and try things. It’s always valuable to know a little, and maybe more importantly to develop a sense of what you don’t know, which helps you look in the right places if you ever really need to know (!).

This is a good stopping point for now. The door is open in the future for a front-end visualization of network activity, as well as a more comprehensive protocol update.